Uncertainty Quantification and Outer-Loop Applications

The goal of uncertainty quantification (UQ) is the characterization, identification, management and reduction of uncertainties in computer-based models and engineering systems. UQ makes it possible to calculate how likely certain results are when aspects of the system or model under investigation are not completely known, are subject to stochastic fluctuations, or are described only by incomplete information. Computer-based models are widely used in the product development cycle in many industries. In contrast, uncertainty and incomplete information are often difficult to understand and are thus almost always neglected, because accounting for them in complex computer-based models requires a high degree of statistical know-how and specific algorithms. Moreover, many traditional UQ approaches require a very large number of model evaluations. In recent years, UQ has developed into a rapidly growing interdisciplinary research area at the interface between applied statistics and data science, machine learning and classical engineering.

Our solution for you:

AdCo EngineeringGW bundles know-how from statistics, machine learning and engineering to provide innovative algorithms and software for the quantification of uncertainties. Because some of these very powerful methods require only information about input and output variables, they can be applied to a wide variety of problems. The same method may work well for both engineering systems and problems in the financial industry.

Quantification of uncertainties offers many benefits, including:

- The understanding of systemic uncertainties,

- More precise predictions,

- Predictions of system responses over the entire range of the uncertain parameters,

- The quantification of confidence intervals for predictions of computational models,

- The calculation of optima that are robust against variations of the input parameters, and

- The reduction of development time, costs and the number of unexpected failures.

MATHEMATICAL MODELING OF UNCERTAINTIES

- Simple probabilistic models such as random variables are often insufficient for describing uncertain input parameters.

- Using multidimensional stochastic fields, we can take spatially and temporally correlated random quantities into account in a model.

- The consideration of cross correlations and non-Gaussian distributions is also possible.

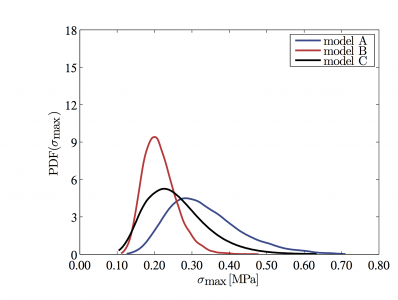
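
As a rough illustration of the idea of a correlated stochastic field, the following minimal sketch draws samples of a one-dimensional Gaussian random field and transforms them into a non-Gaussian (lognormal) field. The exponential covariance function, the correlation length and the function name `sample_gaussian_field` are assumptions chosen for this example, not a description of our software.

```python
import numpy as np

def sample_gaussian_field(x, corr_length, sigma, n_samples, rng):
    """Draw samples of a zero-mean Gaussian random field on the grid x."""
    # Exponential covariance between all pairs of grid points (assumed model).
    dist = np.abs(x[:, None] - x[None, :])
    cov = sigma**2 * np.exp(-dist / corr_length)
    # A tiny jitter keeps the Cholesky factorization numerically stable.
    chol = np.linalg.cholesky(cov + 1e-10 * np.eye(len(x)))
    # Correlate independent standard normal draws with the Cholesky factor.
    z = rng.standard_normal((len(x), n_samples))
    return (chol @ z).T  # shape: (n_samples, len(x))

rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 50)
gaussian_field = sample_gaussian_field(
    x, corr_length=0.2, sigma=0.3, n_samples=2000, rng=rng
)

# A pointwise exponential transform gives a strictly positive lognormal field,
# e.g. for a spatially varying material parameter.
lognormal_field = np.exp(gaussian_field)
```

Neighboring grid points in each sample are strongly correlated, while points far apart are nearly independent, which a single random variable cannot represent.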

UNCERTAINTY PROPAGATION

- For predictions of numerical models, probability distributions are calculated and confidence intervals are quantified.

- We enable a highly efficient calculation of uncertainty propagation based on emulators.

- Furthermore, we offer novel multi-fidelity methods for propagating uncertainties through complex simulation models.
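
The emulator-based idea can be sketched in a few lines: a small number of model runs is used to fit a cheap surrogate, and the Monte Carlo sampling is then carried out on the surrogate instead of the model. Everything here is an assumption for illustration: `expensive_model` is a hypothetical stand-in for a costly simulation, the emulator is a simple polynomial fit rather than a production-grade method, and the input distribution is chosen arbitrarily.

```python
import numpy as np
from numpy.polynomial import Polynomial

def expensive_model(x):
    """Hypothetical stand-in for a costly simulation."""
    return x * np.sin(3.0 * x)

rng = np.random.default_rng(1)

# 1) A small design of "expensive" runs over the plausible input range.
x_train = np.linspace(0.0, 2.0, 20)
y_train = expensive_model(x_train)

# 2) Cheap emulator: least-squares polynomial fit to the training runs.
emulator = Polynomial.fit(x_train, y_train, deg=8)

# 3) Monte Carlo on the emulator for the uncertain input x ~ N(1.0, 0.2^2).
x_samples = rng.normal(loc=1.0, scale=0.2, size=20_000)
y_samples = emulator(x_samples)

# Summary of the output distribution: mean and central 95% interval.
mean = y_samples.mean()
lower, upper = np.percentile(y_samples, [2.5, 97.5])
print(f"mean = {mean:.3f}, 95% interval = [{lower:.3f}, {upper:.3f}]")
```

In this sketch the model is evaluated only 20 times, while the 20,000 Monte Carlo samples are evaluated on the emulator.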

STATISTICAL MODEL CALIBRATION

- Via statistical calibration, it is possible to make the most of available experimental data and ground the simulation models in reality.

- Statistical calibration allows for quantifying uncertainties in all aspects of the model.

- Statistical calibration also allows for determining discrepancies between the model and the observed data for the optimized calibration parameters.

SENSITIVITY ANALYSIS

- Using sensitivity analysis, the most important model parameters can be identified efficiently.

- We offer variance-based and screening methods for sensitivity analysis.
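
To make the variance-based approach concrete, here is a minimal sketch that estimates first-order Sobol indices with a pick-freeze Monte Carlo estimator. The test model (a weighted sum of three independent standard normal inputs) and the function name `first_order_sobol` are assumptions for this example. For this particular model the exact indices are 16/21, 4/21 and 1/21, so the estimate can be checked against them.

```python
import numpy as np

def model(x):
    """Hypothetical test model: a weighted sum of three inputs."""
    return 4.0 * x[:, 0] + 2.0 * x[:, 1] + 1.0 * x[:, 2]

def first_order_sobol(model, n_inputs, n_samples, rng):
    """Estimate first-order Sobol indices for independent N(0, 1) inputs."""
    # Two independent sample matrices A and B.
    a = rng.standard_normal((n_samples, n_inputs))
    b = rng.standard_normal((n_samples, n_inputs))
    f_a, f_b = model(a), model(b)
    total_var = np.var(np.concatenate([f_a, f_b]))
    indices = np.empty(n_inputs)
    for i in range(n_inputs):
        # AB_i equals A except for column i, which is taken from B.
        ab_i = a.copy()
        ab_i[:, i] = b[:, i]
        # f(B) and f(AB_i) share only input i, which isolates its variance share.
        indices[i] = np.mean(f_b * (model(ab_i) - f_a)) / total_var
    return indices

rng = np.random.default_rng(2)
s1 = first_order_sobol(model, n_inputs=3, n_samples=50_000, rng=rng)
print("estimated first-order indices:", np.round(s1, 3))
```

The first input dominates the output variance here, so it would be ranked as the most important parameter.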

INVERSE ANALYSIS / PARAMETER IDENTIFICATION

- Inverse analysis allows for the identification of unknown and not directly measurable parameters of a system, via a model of the system.

- To solve these mathematically ill-posed problems, we offer probabilistic methods, which provide more reliable results than conventional deterministic methods.
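
A probabilistic treatment of an inverse problem can be illustrated with a one-parameter example in which the posterior distribution is evaluated on a grid. The exponential-decay `forward_model`, the synthetic data, the known noise level and the uniform prior are all assumptions made for this sketch; practical problems usually need sampling or approximation methods instead of a grid.

```python
import numpy as np

def forward_model(k, t):
    """Hypothetical forward model: exponential decay with unknown rate k."""
    return np.exp(-k * t)

rng = np.random.default_rng(3)

# Synthetic noisy observations generated with a known "true" parameter.
t_obs = np.linspace(0.1, 2.0, 15)
k_true, noise_std = 1.5, 0.05
y_obs = forward_model(k_true, t_obs) + rng.normal(0.0, noise_std, t_obs.size)

# Uniform prior on a grid of candidate values for k.
k_grid = np.linspace(0.0, 5.0, 2001)
dk = k_grid[1] - k_grid[0]

# Gaussian log-likelihood of the data for every candidate k.
residuals = y_obs[None, :] - forward_model(k_grid[:, None], t_obs[None, :])
log_likelihood = -0.5 * np.sum((residuals / noise_std) ** 2, axis=1)

# Subtract the maximum before exponentiating to avoid underflow, then normalize.
posterior = np.exp(log_likelihood - log_likelihood.max())
posterior /= posterior.sum() * dk

post_mean = np.sum(k_grid * posterior) * dk
post_std = np.sqrt(np.sum((k_grid - post_mean) ** 2 * posterior) * dk)
print(f"k = {post_mean:.3f} +/- {post_std:.3f}")
```

Unlike a single best-fit value, the result is a distribution over the parameter, so the remaining uncertainty after seeing the data is reported together with the estimate.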

BAYESIAN OPTIMIZATION

- Parameters of very complex and computationally intensive models can also be optimized efficiently using Bayesian optimization.

- Bayesian optimization is particularly beneficial when function evaluations are expensive, gradient information is unavailable, the problem is non-convex, or the function evaluations are contaminated with noise.
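
The basic loop behind Bayesian optimization can be sketched as follows: a Gaussian process is fitted to the evaluations made so far, and the next evaluation point is chosen by maximizing the expected improvement. The one-dimensional `objective`, the fixed kernel length scale, the zero-mean unit-variance prior and the candidate grid are assumptions for this illustration and not a description of a specific implementation.

```python
import math
import numpy as np

def objective(x):
    """Hypothetical expensive, non-convex objective (to be minimized)."""
    return np.sin(3.0 * x) + 0.5 * (x - 0.5) ** 2

def rbf_kernel(a, b, length_scale=0.3):
    return np.exp(-0.5 * ((a[:, None] - b[None, :]) / length_scale) ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean, unit-variance GP."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train)
    mean = k_qt @ np.linalg.solve(k_tt, y_train)
    var = 1.0 - np.sum(k_qt * np.linalg.solve(k_tt, k_qt.T).T, axis=1)
    return mean, np.sqrt(np.maximum(var, 1e-12))

_erf = np.vectorize(math.erf)

def expected_improvement(mean, std, best):
    """Expected improvement over the best observed value (minimization)."""
    z = (best - mean) / std
    cdf = 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (best - mean) * cdf + std * pdf

rng = np.random.default_rng(4)
candidates = np.linspace(-1.0, 2.0, 301)

# Start from three random evaluations of the objective.
x_train = rng.uniform(-1.0, 2.0, size=3)
y_train = objective(x_train)
initial_best = y_train.min()

for _ in range(15):
    mean, std = gp_posterior(x_train, y_train, candidates)
    ei = expected_improvement(mean, std, y_train.min())
    x_next = candidates[np.argmax(ei)]  # most promising candidate
    x_train = np.append(x_train, x_next)
    y_train = np.append(y_train, objective(x_next))

best_x = x_train[np.argmin(y_train)]
best_y = y_train.min()
print(f"best x = {best_x:.3f}, best value = {best_y:.3f}")
```

The loop uses 18 objective evaluations in total and no gradient information, which is the setting in which this kind of method is typically attractive.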